The results of the annual examinations conducted by prominent boards across the nation have been announced. There is jubilation and amazement everywhere. I know of no one, across all boards, who has ‘failed’, though I know of some who were incapacitated or dreadfully unwell and unable to write the examinations. For such candidates, the result was recorded as PCNR, or Pass Certificate Not Awarded.

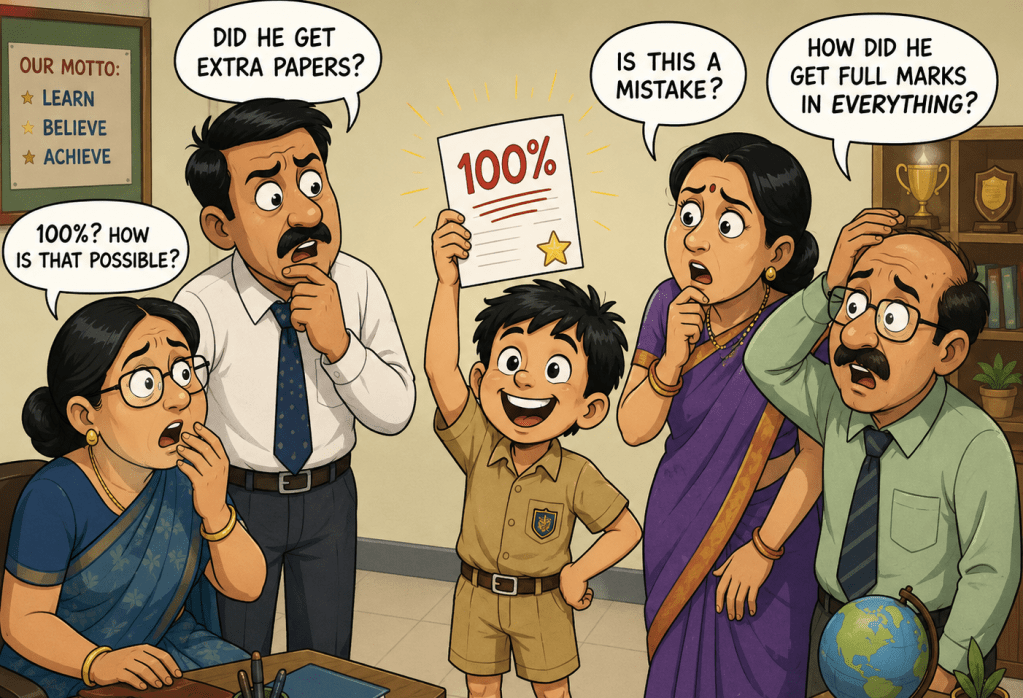

In the meantime, the circus is in full swing. Full-page newspaper advertisements announce the success of institutions; flexible vinyl hoardings with mug-shots of the centum achievers dot the city landscape; parents are literally weighed in fruits and sweets in some schools; teachers of examination classes receive significant cash awards; social media is abuzz with the success – good wishes and blessings pour forth.

In the midst of this euphoria, it might seem churlish to prick the bubble of individual and institutional conceit and ask the question … How much do our children really know?

If you are not a parent or actively involved in this national celebration, there are questions to be asked. At the moment, everyone seems happy: the advertising industry is happy, schools and teachers are happy, parents are happy, students are happy. But at the back of the minds of other stakeholders, such as institutions of higher learning and potential employers, the question still arises … How much do our children really know?

To be fair, many are already questioning the validity of the figures presented. The interpretation of the results is arcane – mysterious, secret and understood by only a few. It requires specialized knowledge that seems hidden or encoded to the average observer. The figures themselves are interchangeably described as scores, marks and grades, even though the terms are not synonyms. Each term carries a system of assessment or evaluation of its own.

The inflation of numbers, studiously justified by the jargon of statistics, appears to be a national fraud, and is definitely not in the national interest. The amalgam of scores, marks and grades into a cocktail of achievement leaves the perspicacious doubting the verity of the system. This bubble must burst. Until then, everybody is happy.

More and more, the analytical systems behind competitive examinations reject board examination results as reliable markers of achievement. The meritocracy of numbers does not ensure admission into institutions of higher learning. The competitive examination season begins the moment board examination results are announced. In cities such as Kota, the local economy thrives on promises of success through ‘coaching’, or cramming, classes. At its extreme, this has led to suicides among young people who could not withstand the pressure. Central Universities have their own entrance tests, while popular streams of education such as engineering, medicine and accountancy follow the same route, devising their own systems to actually test … How much do our children really know?

The numbers speak for themselves. Consider the published figures of three examination boards with which we are familiar: the Central Board of Secondary Education (CBSE), the Council for the Indian School Certificate Examinations (CISCE) and the Uttar Pradesh Madhyamik Shiksha Parishad (U.P. Board). The results for 2026 are as follows:

| Board | Class 10 (Secondary) | Class 12 (Senior Secondary) |
|---|---|---|
| CISCE (ICSE/ISC) | 99.18% pass rate; 258,721 appeared; 2,131 PCNR* | 99.13% pass rate; 103,316 appeared; 902 PCNR* |
| CBSE | ~95% pass rate (millions appeared) | Class 12 results pending (over 18 lakh candidates) |
| UP Board (UPMSP) | 90.42% pass rate; 27.5 lakh appeared; ~2.6 lakh failed | 80.38% pass rate; 24.8 lakh appeared; ~4.9 lakh failed |

*PCNR: Pass Certificate Not Awarded

To analyse the results of a chosen examination board (using the CISCE as an example), consider the following:

In the ISC (Class 12) Examinations 2026, out of 103,316 candidates who wrote the examination, a mere 902 did not receive a Pass Certificate. This does not indicate that they ‘failed’ the examination, but rather that, for various reasons, Pass Certificate Not Awarded (PCNR) was recorded against their names. The overall pass percentage was 99.13%.

In the ICSE (Class 10) Examinations 2026, out of 258,721 candidates who appeared, 2,131 did not receive a Pass Certificate (PCNR). The overall pass percentage was 99.18%.
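The headline pass percentages can at least be checked against the published counts. The short Python sketch below assumes, as the board figures imply, that every candidate who appeared and was not recorded as PCNR counts as a pass; the function name `pass_percentage` is hypothetical.

```python
# CISCE 2026 figures as quoted in this article: (candidates appeared, PCNR count).
RESULTS = {
    "ISC (Class 12)": (103_316, 902),
    "ICSE (Class 10)": (258_721, 2_131),
}


def pass_percentage(appeared: int, pcnr: int) -> float:
    """Percentage of candidates who were awarded a Pass Certificate.

    Assumes every candidate not recorded as PCNR counts as a pass.
    """
    return 100 * (appeared - pcnr) / appeared


for exam, (appeared, pcnr) in RESULTS.items():
    print(f"{exam}: {pass_percentage(appeared, pcnr):.2f}%")
```

Both values round to the published 99.13% and 99.18%. The arithmetic of the pass rate is sound; what it cannot reveal is how the individual marks behind it were arrived at.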

For all three examination boards, examiners evaluate answer scripts according to detailed rubrics set by a Moderator for each subject. These rubrics are vetted during a coordination meeting with the team leaders of examiners.

Significantly, all three boards emphasize that the numbers recorded on their certificates are percentages. Regrettably, there is no transparency in the manner in which these results are calculated. A percentage implies how much of the examination material was mastered. It further implies that every candidate is evaluated uniformly, against the same maximum marks, so that results are comparable across years and schools.

Examination boards often shield themselves from criticism of inflated marks by claiming that the purpose of the boards is merely to certify completion of schooling. The emphasis is on whether a student has met minimum standards and thresholds (e.g. 33% or 40%), not on their relative standing or ranking. The blanket explanation given is that the marks have undergone a process of ‘moderation and standardisation’. Moderation and scaling are applied, ostensibly to ensure fairness across different examination papers and correction centres.

This veers sharply in the direction of a percentile system, which changes the dynamics of what the results are expected to reflect. Some regard percentile ranks as redundant and misleading in themselves. But when moderation and scaling, using the formulae for calculating percentiles, produce a figure that is then passed off as a percentage, the result is potentially misleading and undermines clarity.

This abnormally high success rate raises questions about grade inflation. It also raises a further question: is such reportage academically ethical?

‘Standardisation’ or Academic Charlatanism?

Technically, a percentile could be presented as a score, mark or percentage, because all of them are often expressed on a 0–100 scale. However, doing so is a fundamental misrepresentation of data, is considered academically dishonest, and has serious ethical implications.

We are reminded that numbers often look the same. Without a clear key or legend – much like a map – the necessary context is lacking for interpretation.

If you score in the 90th percentile on a very difficult examination, but your actual percentage was only 62%, you might be tempted to tell someone you got a “90.” To the untrained eye, “90” looks like an A, whereas “62” looks like a D. Yet the two figures describe completely different realities: the first is a rank among candidates, the second a measure of how much was answered correctly.

Centum Achievers

A common misreading of results is seen in the large number of examinees awarded a centum in multiple papers. While a perfect score of 100 marks in purely objective papers (such as mathematics, computer applications and the sciences) is conceivable, it once again raises the question of how a centum can be awarded for expressive, creative and subjective papers, such as Languages, Literature and Social Sciences, where higher-order thinking questions are the norm.

Classifications in Evaluation

For the uninitiated, a quick study of the different terms used in evaluation, which are often used as synonyms, is helpful:

1. A Raw Score

A Raw Score (or simply, score) is a direct measurement of a candidate’s performance based on set criteria, such as a Marking Scheme or ‘Key’. A score is objective. It is usually expressed as a number or a percentage of the total possible points. The raw score measures an individual’s accuracy or achievement.

For example: if a candidate answers 62 out of 100 questions correctly on a test, the candidate’s score is recorded as 62%. This does not change, regardless of how other candidates performed.

2. A Mark

A Mark is similar to a score, with the difference that a mark may involve professional judgment. A teacher may decide that a piece of writing deserves an “A” even if the raw score is not perfect, because it meets qualitative standards of creativity or clarity. Marking is therefore often subjective, especially in subjects such as languages and literature, where responses cannot be objectively quantified.

3. A Grade

A grade is a standardized symbol or letter used to represent a level of achievement within a specific system. It is a qualitative descriptor (like A, B, C or 1–10) based on a predefined scale.

For example: a score of 92% might be assigned a grade of “A”. This provides a quick, easy-to-understand summary of a student’s performance level, but it does not reveal the candidate’s exact raw achievement.
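To make this loss of information concrete, here is a minimal Python sketch of a grade lookup. The bands (90 and above for an “A”, 80 for a “B”, and so on) are purely hypothetical, chosen to match the everyday impression that “90” looks like an A and “62” like a D; they are not any board’s published scale, and `to_grade` is an illustrative name.

```python
# Hypothetical letter-grade bands (minimum percentage, grade), highest first.
# These cut-offs are illustrative assumptions, not any board's published scale.
GRADE_BANDS = [(90, "A"), (80, "B"), (70, "C"), (60, "D")]


def to_grade(percentage: float) -> str:
    """Return the grade of the first band whose cut-off the percentage meets."""
    for cutoff, grade in GRADE_BANDS:
        if percentage >= cutoff:
            return grade
    return "F"  # below every hypothetical band


# Different raw achievements collapse into the same symbol.
print(to_grade(91), to_grade(92), to_grade(99))  # A A A
print(to_grade(62))                              # D
```

A reader who sees only the “A” cannot tell whether the candidate scored 91 or 99.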

4. A Percentage

A percentage is a way of expressing a score as a fraction of 100. It is an indicator of absolute performance.

For example: a candidate who scores 40 out of 50 has a percentage achievement of 80%. This helps to normalize scores so that performance can be compared across different tests (e.g., comparing a 10-point class test to a 100-point exam).
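The normalisation described here is simple arithmetic. A minimal Python sketch, using a hypothetical `percentage` helper:

```python
def percentage(marks: float, maximum: float) -> float:
    """Express marks obtained as a share of the maximum marks, out of 100."""
    if maximum <= 0:
        raise ValueError("maximum marks must be positive")
    return 100 * marks / maximum


print(percentage(40, 50))   # 80.0, the example in the text
print(percentage(8, 10))    # 80.0 on a 10-point class test
print(percentage(80, 100))  # 80.0 on a 100-point examination
```

Note that the function needs only the candidate’s own marks and the maximum marks; no other candidate’s performance enters the calculation.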

5. A Percentile Rank

A percentile rank (or simply, percentile) is a comparative statistic. It indicates the percentage of candidates in a group whom the candidate outperformed.

It establishes the candidate’s rank relative to the total number of examinees. This is especially relevant in tests where the difficulty level might change every year. It is a statistical indicator of relative performance.

For example: if the candidate is placed in the 90th percentile, it indicates that the candidate scored HIGHER than 90% of the people who wrote the test. The candidate is placed in the bracket of the top 10% of the total number of examinees. It does NOT mean that the examinee answered 90% of the questions correctly.
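A percentile rank, unlike a percentage, cannot be computed from one candidate’s marks alone; it needs the whole cohort. The Python sketch below is an illustration under stated assumptions: it uses the strict “scored higher than” definition given here (boards may use other conventions, such as counting ties as half), `percentile_rank` is a hypothetical name, and the cohort of 100 marks is invented. It reproduces the earlier case of a 62% candidate sitting at the 90th percentile.

```python
def percentile_rank(score: float, cohort: list[float]) -> float:
    """Percentage of the cohort whose marks are strictly below `score`.

    Follows the 'scored higher than' definition; ties are not counted.
    """
    if not cohort:
        raise ValueError("cohort must not be empty")
    below = sum(1 for other in cohort if other < score)
    return 100 * below / len(cohort)


# Invented cohort of 100 candidates on a very difficult paper.
cohort = [40] * 90 + [62] * 5 + [70] * 5

print(percentile_rank(62, cohort))  # 90.0: a 62% paper sits at the 90th percentile
```

The same 62 marks would yield a very different percentile in a different cohort, which is precisely why the two figures are not interchangeable.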

The Ethical Perspective

In an academic or professional setting, misrepresenting a percentile as a score or percentage is unethical. There seems to be an underlying intention to deceive. Scores/Marks/Percentages measure competency (what you know), while percentiles measure competitiveness (how you compare). Blurring the distinction is a deliberate attempt to hide the candidate’s actual level of mastery. While it is true that percentile calculations with very large numbers of examinees provide more reliable rankings, they also mask individual performance differences.

Academic integrity is at stake when percentiles are passed off as percentages and ranks as marks. This might be seen as wilful misrepresentation of academic records. In short, academic fraud. When a candidate seeking admission to an institution of higher learning is asked for their ‘score’ and a ‘percentile’ is provided instead, an incorrect data point is being provided that skews the evaluation process.

The candidates for the school-leaving examination are not to blame, as there is no legend or key explaining how the figure provided was calculated. An observer is deliberately led to believe that the figure given is actually a percentage, because the marksheet says so. How this ‘percentage’ was calculated cannot be verified!

Perceivable Danger

The danger of swapping the percentile rank for a percentage becomes evident from two extreme examples:

In the case of an ‘easy test’, a candidate might appear to be a genius when their performance is actually average. The actual score achieved might be 97, yet the percentile might place the candidate only at the 50th percentile if many others did better.

In the case of a ‘difficult test’, a candidate might be at the top of the class with a score of only 39, which in many cases reflects failure. Nevertheless, the percentile rank could place the candidate at the 95th percentile.
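Both extremes can be reproduced with invented cohorts of 100 candidates each, using the same strict “scored higher than” definition of percentile rank. The marks below are hypothetical, assume a paper out of 100, and are chosen only to match the two scenarios described.

```python
def percentile_rank(score: float, cohort: list[float]) -> float:
    """Percentage of the cohort whose marks are strictly below `score`."""
    below = sum(1 for other in cohort if other < score)
    return 100 * below / len(cohort)


# Invented cohorts of 100 candidates each; marks are out of 100.
easy_test = [95] * 50 + [97] + [99] * 49  # 50 score below 97, 49 score above
difficult_test = [20] * 95 + [39] * 5     # the best mark anyone gets is 39

for label, cohort, marks in [("Easy", easy_test, 97), ("Difficult", difficult_test, 39)]:
    rank = percentile_rank(marks, cohort)
    print(f"{label} test: {marks}/100 marks, {rank:.0f}th percentile")
```

Read in isolation, the percentage flatters the first candidate and condemns the second, while the percentile does the opposite; neither figure alone tells the whole story.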

In casual parlance, passing off a rank as a score is common. When formally recorded on a valid academic document, it is an ethical breach.

Suggested Solution

The solution to this lies in requiring explicit statements on official documents that combine individual achievement with comparative positioning. The honest way to present this is to list both:

“Scored 75% in the examination, placing the candidate in the 99th percentile.”

This would indicate both the candidate’s absolute performance and their relative standing without being deceptive. Until then, generations of examinees will receive a marksheet that is merely a certification of having written an examination, without the crucial information of individual achievement. A wag once claimed that the Grade 10 certificate is only useful to legally record a candidate’s date of birth!
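The dual statement proposed above could be produced mechanically from a candidate’s marks and the marks of the whole cohort. The Python sketch below is one possible shape for it, not any board’s procedure: the helper names `ordinal` and `dual_statement` are hypothetical, the percentile uses the strict “scored higher than” definition, and it is truncated rather than rounded so that it never overstates the candidate’s standing.

```python
def ordinal(n: int) -> str:
    """Return n with its English ordinal suffix, e.g. 1st, 12th, 23rd."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def dual_statement(marks: float, maximum: float, cohort: list[float]) -> str:
    """Report absolute performance and relative standing side by side."""
    pct = 100 * marks / maximum
    below = sum(1 for other in cohort if other < marks)
    rank = int(100 * below / len(cohort))  # truncate: never overstate the rank
    return (
        f"Scored {round(pct, 2):g}% in the examination, "
        f"placing the candidate in the {ordinal(rank)} percentile."
    )


# Invented cohort: 99 of 100 candidates scored below 75.
print(dual_statement(75, 100, [50] * 99 + [75]))
```

For the invented cohort, this prints the very sentence suggested above, with both numbers traceable to their inputs.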
